Tuning curves#

With Pynapple you can easily compute n-dimensional tuning curves (for example, firing rate as a function of 1D angular direction or firing rate as a function of 2D position). It is also possible to compute average firing rate for different epochs (for example, firing rate for different epochs of stimulus presentation).

From timestamps or continuous activity#

Computing tuning curves is done using compute_tuning_curves.

When computing from general time-series, mandatory arguments are:

data: a TsGroup (or single Ts) or TsdFrame (or single Tsd) containing the neural activity of one or more units.

features: a Tsd or TsdFrame containing one or more features.

By default, 10 bins are used for all features, but you can specify the number of bins,

or the bin edges explicitly, using the bins argument.

The min and max of the tuning curves are by default the minima and maxima of the features.

This can be tweaked with the range argument.

If an IntervalSet is passed with epochs, everything is restricted to epochs,

otherwise the time support of the features is used.

If you do not want the sampling rate of the features to be estimated from the timestamps,

you can pass it explicitly using the fs argument.

You can also pass a list of strings to label each dimension via feature_names

(by default the columns of the features are used).

The output is an xarray.DataArray in which the first dimension represents the units and further dimensions represent the features.

The occupancy and bin edges are stored as attributes.

If you explicitly want a pd.DataFrame as output (which is only possible when you have a single feature),

you can set return_pandas=True. Note that this will not return the occupancy and bin edges.
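
To make the quantities described above concrete, here is a minimal NumPy sketch of what a 1D tuning curve from spikes amounts to: spike counts per feature bin divided by the time the feature spent in that bin (the occupancy). It is an illustration under simplifying assumptions, not pynapple's implementation: the function name and its arguments are hypothetical, each spike is assigned the most recent feature sample, and the sampling rate is estimated from the median time step.

```python
import numpy as np


def tuning_curve_1d_sketch(spike_times, feature_times, feature_values,
                           bins=10, value_range=None, fs=None):
    """Hypothetical helper: firing rate (Hz) per feature bin."""
    spike_times = np.asarray(spike_times, dtype=float)
    feature_times = np.asarray(feature_times, dtype=float)
    feature_values = np.asarray(feature_values, dtype=float)
    if value_range is None:
        value_range = (feature_values.min(), feature_values.max())
    if fs is None:
        # Estimate the feature sampling rate from the median time step
        fs = 1.0 / np.median(np.diff(feature_times))

    # Occupancy: number of feature samples per bin, converted to seconds
    n_samples, edges = np.histogram(feature_values, bins=bins, range=value_range)
    occupancy = n_samples / fs

    # Assumption: each spike takes the value of the most recent feature sample
    idx = np.searchsorted(feature_times, spike_times, side="right") - 1
    idx = np.clip(idx, 0, len(feature_times) - 1)
    counts, _ = np.histogram(feature_values[idx], bins=edges)

    # Bins that were never visited get NaN instead of a division by zero
    with np.errstate(divide="ignore", invalid="ignore"):
        rates = np.where(occupancy > 0, counts / occupancy, np.nan)
    centers = (edges[:-1] + edges[1:]) / 2
    return centers, rates, occupancy


# Toy data: the feature sits at 0.25 for 5 s, then at 0.75 for 5 s (10 Hz sampling)
feature_times = np.arange(100) / 10.0
feature_values = np.repeat([0.25, 0.75], 50)
spike_times = [1.02, 2.02, 3.02, 7.02]

centers, rates, occupancy = tuning_curve_1d_sketch(
    spike_times, feature_times, feature_values, bins=2, value_range=(0, 1)
)
```

In this toy case three spikes fall in the first bin and one in the second, each bin being occupied for about 5 s, so the two rates differ by a factor of three.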

1D tuning curves from spikes#

We will start by simulating some spiking units modulated by a 1D circular variable:

import matplotlib.pyplot as plt

import numpy as np

import pynapple as nap

import xarray as xr

# Feature

T = 500

dt_feature = 0.02

times_feature = np.arange(0, T, dt_feature)

feature = nap.Tsd(

t=times_feature, d=np.pi + np.pi * np.cos(2 * np.pi * times_feature / 10)

)

# Spikes

N = 6

max_rate = 20

dt_spikes = 0.002

feature_interp = feature.interpolate(nap.Ts(np.arange(0, T, dt_spikes)))

centers = np.linspace(0, 2 * np.pi, N, endpoint=False)

rates = max_rate * np.exp(

-10 * (np.sin((feature_interp.d[:, np.newaxis] - centers) / 2)) ** 2

)

tsgroup_1d = nap.TsGroup(

{

i + 1: nap.Ts(

feature_interp.t[np.random.poisson(rates[:, i] * dt_spikes) > 0]

)

for i in range(N)

},

)

We now have all the ingredients to compute tuning curves:

tuning_curves_1d = nap.compute_tuning_curves(

data=tsgroup_1d,

features=feature,

bins=120,

range=(0, 2*np.pi),

feature_names=["feature"]

)

tuning_curves_1d

<xarray.DataArray (unit: 6, feature: 120)> Size: 6kB

array([[19.34482759, 21.41666667, 18.7 , 16.875 , 16.5 ,

15. , 14.16666667, 14.83333333, 14.83333333, 8.75 ,

9.75 , 9.66666667, 6.25 , 6. , 5.25 ,

4. , 2.75 , 3.5 , 2.5 , 1. ,

3.25 , 1.25 , 0. , 1. , 0.75 ,

0.5 , 0.5 , 0.5 , 0. , 0.25 ,

0. , 0. , 0. , 0.25 , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0.5 , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0.25 , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0.25 , 0.5 , 0. , 0. , 1.5 ,

0.75 , 0.5 , 0.75 , 1.75 , 2. ,

...

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0.25 , 0. ,

0. , 0. , 0. , 0. , 0.25 ,

0.5 , 0. , 0. , 0. , 0.75 ,

0.5 , 0. , 3. , 1. , 0.5 ,

2.5 , 1. , 3.25 , 2. , 3.25 ,

5.5 , 8.25 , 8.5 , 7.25 , 11.5 ,

12. , 13.5 , 11.75 , 16.5 , 18.75 ,

16.75 , 21. , 19. , 22.25 , 18.5 ,

20.5 , 15. , 21.75 , 20. , 17.75 ,

15.5 , 15.25 , 10.5 , 13.83333333, 8. ,

9.5 , 8.33333333, 7. , 6.83333333, 7. ,

4.66666667, 3.625 , 3.3 , 2.33333333, 1.62068966]])

Coordinates:

* unit (unit) int64 48B 1 2 3 4 5 6

* feature (feature) float64 960B 0.02618 0.07854 0.1309 ... 6.152 6.205 6.257

Attributes: (4)

The xarray.DataArray can be treated like a numpy array.

It has a shape:

tuning_curves_1d.shape

(6, 120)

It can be sliced:

tuning_curves_1d[1, 2:8]

<xarray.DataArray (feature: 6)> Size: 48B

array([2.6 , 2.25 , 3.16666667, 4.66666667, 5.16666667,

6.83333333])

Coordinates:

* feature (feature) float64 48B 0.1309 0.1833 0.2356 0.288 0.3403 0.3927

unit int64 8B 2

Attributes: (4)

It can also be indexed using the coordinates:

tuning_curves_1d.sel(unit=1)

<xarray.DataArray (feature: 120)> Size: 960B

array([19.34482759, 21.41666667, 18.7 , 16.875 , 16.5 ,

15. , 14.16666667, 14.83333333, 14.83333333, 8.75 ,

9.75 , 9.66666667, 6.25 , 6. , 5.25 ,

4. , 2.75 , 3.5 , 2.5 , 1. ,

3.25 , 1.25 , 0. , 1. , 0.75 ,

0.5 , 0.5 , 0.5 , 0. , 0.25 ,

0. , 0. , 0. , 0.25 , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0.5 , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0.25 , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0.25 , 0.5 , 0. , 0. , 1.5 ,

0.75 , 0.5 , 0.75 , 1.75 , 2. ,

0.5 , 2.75 , 3.75 , 3. , 4.5 ,

5.75 , 5.5 , 9.5 , 7.5 , 7. ,

11.25 , 9.83333333, 12.66666667, 16. , 16.33333333,

19.5 , 18.625 , 19. , 20.66666667, 19.86206897])

Coordinates:

* feature (feature) float64 960B 0.02618 0.07854 0.1309 ... 6.152 6.205 6.257

unit int64 8B 1

Attributes: (4)

xarray further has matplotlib support, allowing for easy visualization:

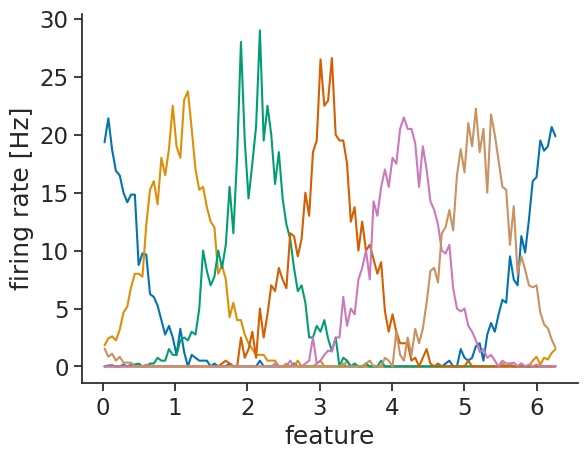

tuning_curves_1d.plot.line(x="feature", add_legend=False)

plt.ylabel("firing rate [Hz]");

You can either customize the plot labels yourself using matplotlib,

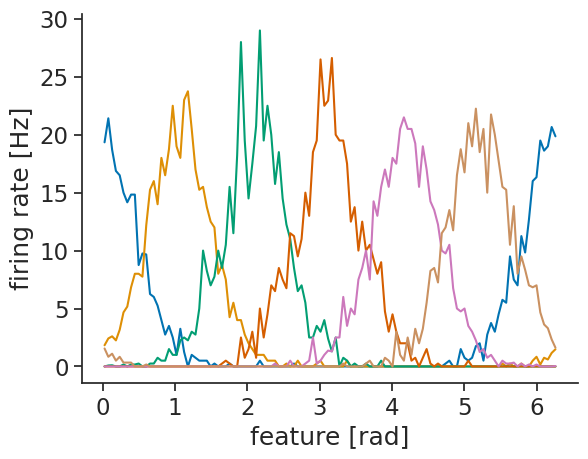

or you can set them in the tuning curve object:

tuning_curves_1d.name = "firing rate"

tuning_curves_1d.attrs["unit"] = "Hz"

tuning_curves_1d.coords["feature"].attrs["unit"] = "rad"

tuning_curves_1d.plot.line(x="feature", add_legend=False);

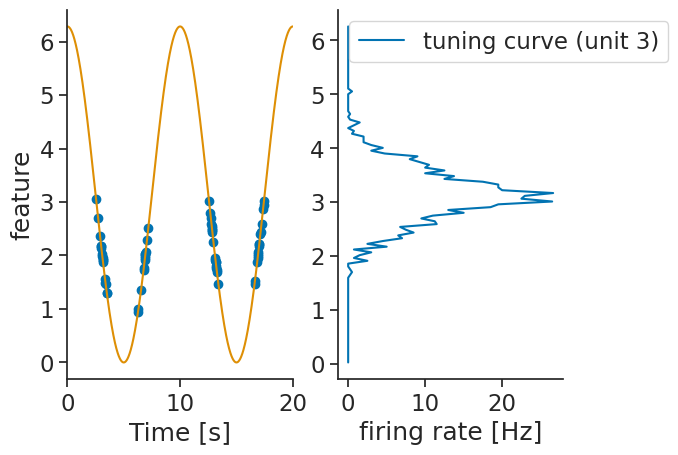

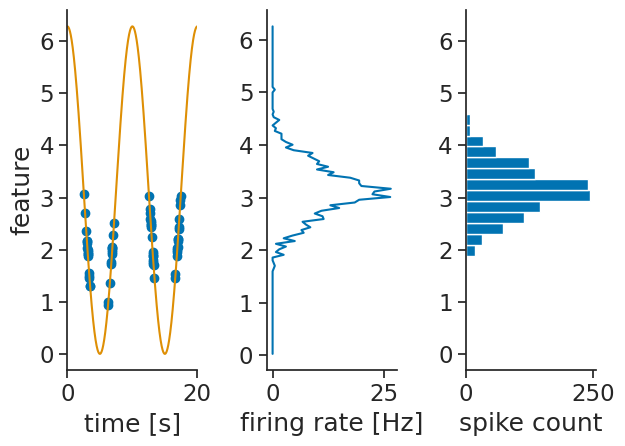

Internally, compute_tuning_curves calls the value_from method, which maps timestamps to their closest values in time from a Tsd object.

It is then possible to validate the tuning curves by displaying the timestamps as well as their associated values.
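
The closest-in-time lookup described above can be sketched in plain NumPy. This is only an illustration of the idea, not the value_from implementation: the helper name is hypothetical, and it assumes sorted sample times with at least two samples.

```python
import numpy as np


def closest_values(query_times, sample_times, sample_values):
    """Hypothetical helper: for each query time, return the value of the
    sample that is closest in time."""
    query_times = np.asarray(query_times, dtype=float)
    sample_times = np.asarray(sample_times, dtype=float)
    sample_values = np.asarray(sample_values)

    # Index of the first sample at or after each query, kept within bounds
    right = np.searchsorted(sample_times, query_times)
    right = np.clip(right, 1, len(sample_times) - 1)
    left = right - 1

    # Pick whichever neighbour is nearer in time
    pick_left = (query_times - sample_times[left]) <= (sample_times[right] - query_times)
    return sample_values[np.where(pick_left, left, right)]


sample_times = [0.0, 1.0, 2.0]
sample_values = [10, 20, 30]
spikes = [0.4, 0.6, 1.9, 5.0]
values_at_spikes = closest_values(spikes, sample_times, sample_values)
```

Plotting such spike-aligned values on top of the feature trace is a quick way to check that a tuning curve peaks where the spikes actually cluster.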

It is also possible to get just the spike counts per bin. This can be done by setting the argument return_counts=True.

The output is also an xarray.DataArray with the same dimensions as the tuning curves.

spike_counts = nap.compute_tuning_curves(

data=tsgroup_1d,

features=feature,

bins=30,

range=(0, 2*np.pi),

feature_names=["feature"],

return_counts=True

)

2D tuning curves from spikes#

Now, let us simulate some spiking units modulated by a 2D circular variable:

features = nap.TsdFrame(

t=times_feature,

d=np.stack(

[

np.cos(2 * np.pi * times_feature / 10),

np.sin(2 * np.pi * times_feature / 10),

],

axis=1,

),

columns=["a", "b"],

)

features_interp = features.interpolate(nap.Ts(np.arange(0, T, dt_spikes)))

alpha = (

np.arctan2(features_interp["b"].values, features_interp["a"].values)

/ np.pi

)

N = 6

centers_2d = np.linspace(-1, 1, N)

rates_2d = (

max_rate

* np.exp(50.0 * np.cos(alpha[:, np.newaxis] - centers_2d))

/ np.exp(50.0)

)

tsgroup_2d = nap.TsGroup(

{

i + 1: nap.Ts(

features_interp.t[

np.random.poisson(rates_2d[:, i] * dt_spikes) > 0

]

)

for i in range(N)

},

)

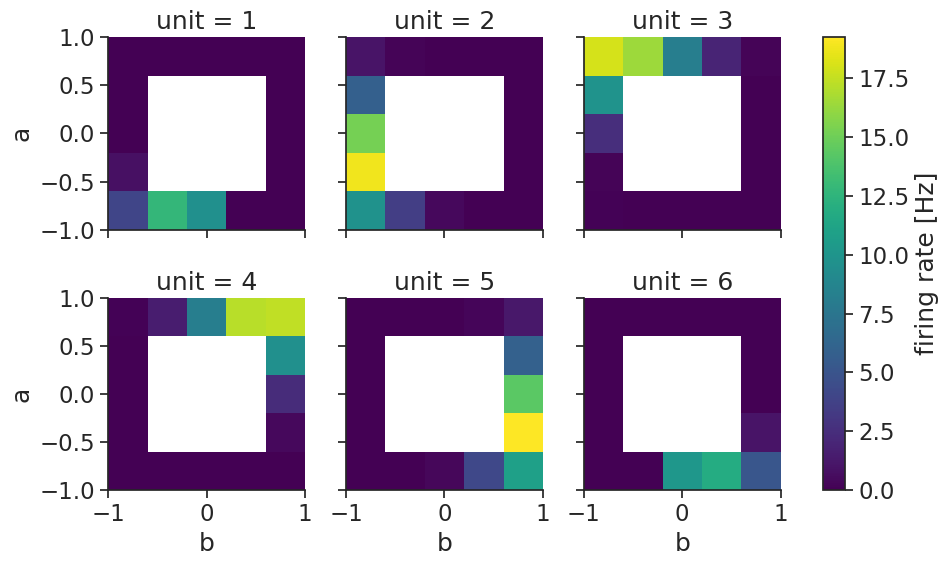

If you pass more than 1 feature, a multi-dimensional tuning curve is computed:

tuning_curves_2d = nap.compute_tuning_curves(

data=tsgroup_2d,

features=features,

bins=(5,5),

range=[(-1, 1), (-1, 1)],

feature_names=["a", "b"]

)

tuning_curves_2d

<xarray.DataArray (unit: 6, a: 5, b: 5)> Size: 1kB

array([[[ 3.95454545, 12.74285714, 9.54545455, 0. ,

0. ],

[ 0.8 , nan, nan, nan,

0. ],

[ 0.06060606, nan, nan, nan,

0. ],

[ 0. , nan, nan, nan,

0. ],

[ 0. , 0. , 0. , 0. ,

0. ]],

[[ 9.81818182, 3.45714286, 0.42424242, 0. ,

0. ],

[18.8 , nan, nan, nan,

0. ],

[15.18181818, nan, nan, nan,

0. ],

[ 5.8 , nan, nan, nan,

0. ],

[ 1.04545455, 0.2 , 0. , 0. ,

...

10.86363636],

[ 0. , nan, nan, nan,

19.22857143],

[ 0. , nan, nan, nan,

14.33333333],

[ 0. , nan, nan, nan,

5.91428571],

[ 0. , 0. , 0.03030303, 0.22857143,

1.27272727]],

[[ 0. , 0. , 10.18181818, 11.85714286,

5.13636364],

[ 0. , nan, nan, nan,

1. ],

[ 0. , nan, nan, nan,

0.06060606],

[ 0. , nan, nan, nan,

0. ],

[ 0. , 0. , 0. , 0. ,

0. ]]])

Coordinates:

* unit (unit) int64 48B 1 2 3 4 5 6

* a (a) float64 40B -0.8 -0.4 1.11e-16 0.4 0.8

* b (b) float64 40B -0.8 -0.4 1.11e-16 0.4 0.8

Attributes: (4)

tuning_curves_2d is again an xarray.DataArray, but now with three dimensions:

one for the units of the TsGroup and two for the features. The coordinates contain the centers of the bins.

Bins that have never been visited by the feature have been assigned a NaN value.
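
The NaN pattern in the output above follows directly from the geometry of the features. The NumPy sketch below rebuilds the same unit-circle trajectory and bins it on a 5 x 5 grid; the spike train is hypothetical (one spike at every 10th feature sample), and the code illustrates the occupancy logic rather than pynapple's internals.

```python
import numpy as np

dt = 0.02  # feature sampling period in seconds
t = np.arange(0, 100, dt)
a = np.cos(2 * np.pi * t / 10)
b = np.sin(2 * np.pi * t / 10)

# Occupancy: time spent in each (a, b) bin, in seconds
n_samples, a_edges, b_edges = np.histogram2d(
    a, b, bins=(5, 5), range=[(-1, 1), (-1, 1)]
)
occupancy = n_samples * dt

# Hypothetical spikes: one at every 10th sample, i.e. roughly 5 Hz
counts, _, _ = np.histogram2d(a[::10], b[::10], bins=(a_edges, b_edges))

# Rate = counts / occupancy; bins with zero occupancy are undefined (NaN)
with np.errstate(divide="ignore", invalid="ignore"):
    rates = np.where(occupancy > 0, counts / occupancy, np.nan)
```

Because the trajectory stays on the unit circle, it never enters the inner 3 x 3 block of bins (where both |a| and |b| are below 0.6), so only the border bins receive a finite rate.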

Two-dimensional tuning curves can also easily be visualized:

tuning_curves_2d.name="firing rate"

tuning_curves_2d.attrs["unit"]="Hz"

tuning_curves_2d.plot(col="unit", col_wrap=3);

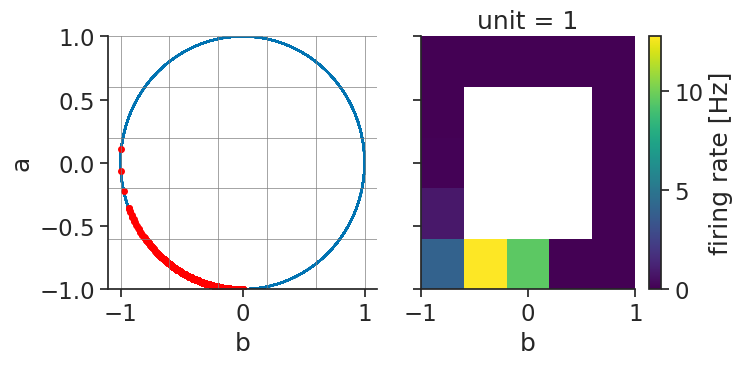

Verifying the accuracy of the tuning curves can once more be done by displaying the spikes aligned

to the features with the function value_from, which assigns to each spike the corresponding feature values (shown here for unit 1).

ts_to_features = tsgroup_2d[1].value_from(features)

ts_to_features

Time (s) a b

------------------ --------- ----------

5.038 -0.999684 -0.0251301

5.048 -0.999684 -0.0251301

5.1000000000000005 -0.998027 -0.0627905

5.21 -0.990461 -0.13779

5.308 -0.982287 -0.187381

5.444 -0.962028 -0.272952

5.446 -0.962028 -0.272952

...

495.434 -0.962028 -0.272952

495.48 -0.954865 -0.297042

495.886 -0.850994 -0.525175

496.03000000000003 -0.79399 -0.60793

496.224 -0.720309 -0.693653

496.442 -0.61786 -0.786288

496.58 -0.546394 -0.837528

dtype: float64, shape: (878, 2)

tsgroup_2d[1], which is a Ts object, has been transformed into a TsdFrame object in which each timestamp (spike time) is associated with the corresponding feature values.

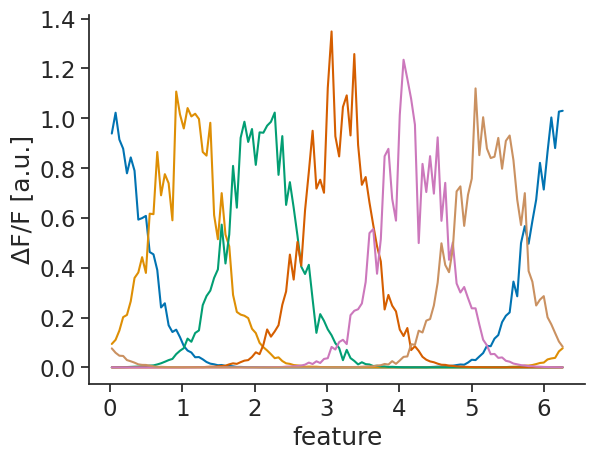

1D tuning curves from continuous activity#

We do not always have spikes. Sometimes we are analysing continuous firing rates or calcium intensities. As an example, we will simulate noisy continuous activity for some units modulated by the 1D variable:

noise_level = 2.0

traces = np.exp(-10 * (np.sin((feature.d[:, np.newaxis] - centers) / 2)) ** 2)

traces = traces * (1 + noise_level * np.random.randn(*traces.shape))

tsdframe_1d = nap.TsdFrame(t=times_feature, d=traces)

The same function can take a Tsd or TsdFrame as data and compute tuning curves for

continuous data:

tuning_curves_1d_continuous = nap.compute_tuning_curves(

data=tsdframe_1d,

features=feature,

bins=120,

range=(0, 2*np.pi),

feature_names=["feature"]

)

tuning_curves_1d_continuous

<xarray.DataArray (unit: 6, feature: 120)> Size: 6kB

array([[9.38720409e-01, 1.02225701e+00, 9.14622643e-01, 8.77904630e-01,

7.79097314e-01, 8.42948667e-01, 7.89518425e-01, 5.93358204e-01,

5.99373000e-01, 6.08259387e-01, 4.63251093e-01, 4.53872965e-01,

3.91117062e-01, 2.40736626e-01, 2.57588065e-01, 1.69177884e-01,

1.42270681e-01, 1.51591175e-01, 1.21484514e-01, 8.61897377e-02,

6.78781079e-02, 5.99475179e-02, 4.09834113e-02, 4.16653987e-02,

3.27079665e-02, 2.19069546e-02, 1.52449577e-02, 1.26420059e-02,

8.89814536e-03, 9.83031773e-03, 6.31494913e-03, 5.87644575e-03,

4.55982351e-03, 1.86888908e-03, 2.10457270e-03, 1.49637642e-03,

1.11190444e-03, 1.20579860e-03, 1.04977403e-03, 6.76915149e-04,

5.77005947e-04, 3.52153472e-04, 2.62949102e-04, 3.29961178e-04,

2.10472528e-04, 2.16033983e-04, 1.09420864e-04, 1.57826985e-04,

1.33804882e-04, 9.96989086e-05, 6.69019925e-05, 7.67036435e-05,

4.21650746e-05, 5.63957292e-05, 6.65474252e-05, 4.70484639e-05,

5.16758503e-05, 5.16007090e-05, 2.93554873e-05, 2.86630367e-05,

5.61873452e-05, 3.73015881e-05, 4.98403085e-05, 5.26005450e-05,

5.31549097e-05, 6.17038177e-05, 5.53469097e-05, 6.86254759e-05,

6.51163351e-05, 7.70840879e-05, 8.09089659e-05, 9.54494636e-05,

1.43938584e-04, 2.20826863e-04, 1.91209437e-04, 2.14487481e-04,

2.68676371e-04, 2.50923508e-04, 4.37989374e-04, 5.60629658e-04,

...

4.90824447e-05, 4.19033274e-05, 4.83109141e-05, 4.31592881e-05,

5.22728396e-05, 5.67459334e-05, 5.71993944e-05, 6.41012056e-05,

4.35988037e-05, 8.10646827e-05, 1.17489123e-04, 1.31682241e-04,

6.95123460e-05, 1.46203422e-04, 3.20621782e-04, 2.33601473e-04,

2.25188736e-04, 3.10807081e-04, 3.37790303e-04, 4.18818236e-04,

6.39583833e-04, 4.34533663e-04, 6.14186221e-04, 1.53802937e-03,

1.77200564e-03, 1.78447988e-03, 2.77582813e-03, 3.59706237e-03,

4.39434682e-03, 4.76224818e-03, 7.63833896e-03, 9.00988441e-03,

1.39735489e-02, 1.17168796e-02, 2.52302254e-02, 1.45642723e-02,

2.79963412e-02, 4.25018607e-02, 4.42486018e-02, 9.33113548e-02,

9.08543424e-02, 1.47630851e-01, 1.40365469e-01, 1.87918699e-01,

1.94258829e-01, 2.48830053e-01, 3.40649911e-01, 4.98226999e-01,

4.11424102e-01, 3.82298971e-01, 4.76564372e-01, 7.06537898e-01,

7.26753906e-01, 5.68165623e-01, 6.92592827e-01, 7.57487809e-01,

1.11991491e+00, 8.52002429e-01, 1.00420560e+00, 8.78509805e-01,

8.40364855e-01, 8.45734594e-01, 9.21103506e-01, 7.97566732e-01,

9.09295890e-01, 9.30791698e-01, 8.31576350e-01, 6.73170524e-01,

5.71773348e-01, 6.99545012e-01, 3.86958167e-01, 3.43951894e-01,

2.48665992e-01, 2.72118277e-01, 2.86197338e-01, 2.01476888e-01,

1.72787445e-01, 1.38115975e-01, 1.04171259e-01, 8.31561510e-02]])

Coordinates:

* unit (unit) int64 48B 0 1 2 3 4 5

* feature (feature) float64 960B 0.02618 0.07854 0.1309 ... 6.152 6.205 6.257

Attributes: (3)

tuning_curves_1d_continuous.name="ΔF/F"

tuning_curves_1d_continuous.attrs["unit"]="a.u."

tuning_curves_1d_continuous.plot.line(x="feature", add_legend=False);

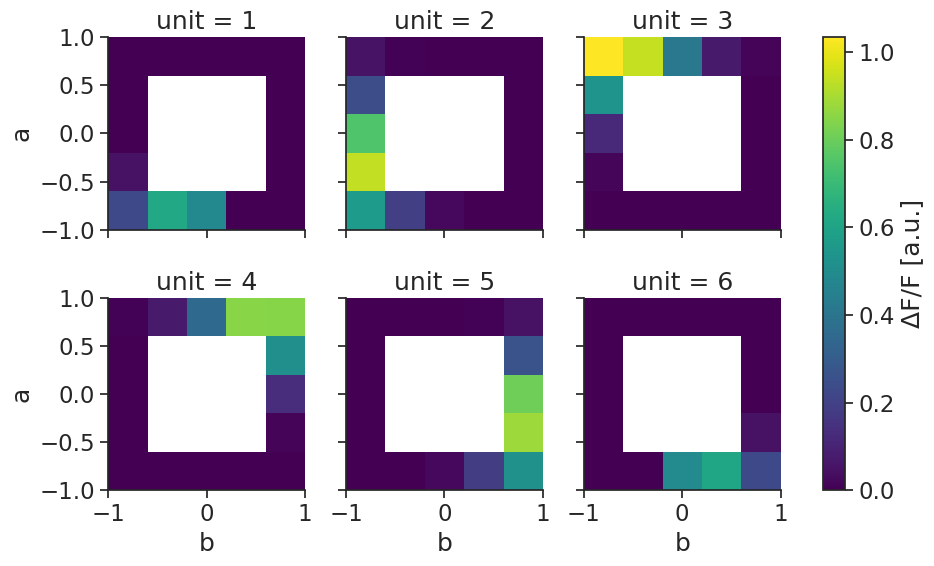
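
For continuous data there are no spikes to count, so a natural reading of the tuning curve is the average activity observed while the feature was in each bin. The sketch below implements that reading in NumPy; the helper name is hypothetical, and treating the curve as a per-bin mean is an assumption about the computation rather than a statement of pynapple's implementation.

```python
import numpy as np


def mean_activity_per_bin(feature_values, activity, bins, value_range):
    """Hypothetical helper: average a continuous signal within each feature bin."""
    feature_values = np.asarray(feature_values, dtype=float)
    activity = np.asarray(activity, dtype=float)
    lo, hi = value_range
    edges = np.linspace(lo, hi, bins + 1)

    # Keep samples inside the range; the last bin includes its right edge
    inside = (feature_values >= lo) & (feature_values <= hi)
    idx = np.digitize(feature_values[inside], edges) - 1
    idx = np.clip(idx, 0, bins - 1)

    # Sum of activity and number of samples per bin
    sums = np.bincount(idx, weights=activity[inside], minlength=bins)
    n_samples = np.bincount(idx, minlength=bins)

    # Unvisited bins have no defined mean and are set to NaN
    with np.errstate(divide="ignore", invalid="ignore"):
        return np.where(n_samples > 0, sums / n_samples, np.nan)


feature_values = [0.1, 0.2, 0.6, 0.9]
activity = [1.0, 3.0, 5.0, 7.0]
curve = mean_activity_per_bin(feature_values, activity, bins=2, value_range=(0, 1))
```

Unlike the spike case, the result keeps the units of the input signal (for example ΔF/F), since no division by time is involved.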

2D tuning curves from continuous activity#

This also works with more than one feature. Let us first simulate noisy continuous activity for some units modulated by the 2D variable:

alpha = np.arctan2(features["b"].values, features["a"].values) / np.pi

traces = np.exp(50.0 * np.cos(alpha[:, np.newaxis] - centers_2d)) / np.exp(50.0)

traces = traces * (1 + noise_level * np.random.randn(*traces.shape))

tsdframe_2d = nap.TsdFrame(t=times_feature, d=traces, columns=range(1, N+1, 1))

The same function again handles computing the tuning curves:

tuning_curves_2d_continuous = nap.compute_tuning_curves(

data=tsdframe_2d,

features=features,

bins=5,

feature_names=["a", "b"]

)

tuning_curves_2d_continuous

<xarray.DataArray (unit: 6, a: 5, b: 5)> Size: 1kB

array([[[2.26212401e-01, 6.19374194e-01, 4.84757575e-01, 4.38574553e-28,

5.36643222e-26],

[4.48196489e-02, nan, nan, nan,

3.72192973e-23],

[3.39549041e-03, nan, nan, nan,

3.01484835e-20],

[1.05086269e-04, nan, nan, nan,

2.73182494e-17],

[2.04000826e-06, 5.86521575e-08, 2.62095115e-10, 1.10398866e-12,

2.61930755e-15]],

[[5.63930683e-01, 1.93092263e-01, 2.05447918e-02, 1.89726253e-19,

2.24215701e-17],

[9.38834247e-01, nan, nan, nan,

1.14398631e-14],

[7.50936457e-01, nan, nan, nan,

4.41464807e-12],

[2.40464031e-01, nan, nan, nan,

1.23338122e-09],

[4.93513529e-02, 7.21050313e-03, 2.85136889e-04, 4.91355560e-06,

...

5.18568011e-01],

[8.64025762e-15, nan, nan, nan,

8.81870717e-01],

[3.60631093e-12, nan, nan, nan,

8.07420616e-01],

[1.32791297e-09, nan, nan, nan,

2.65495721e-01],

[6.50053490e-08, 5.02479539e-06, 2.99742967e-04, 7.68450734e-03,

4.47077649e-02]],

[[4.55358174e-26, 4.16474165e-28, 4.89566725e-01, 6.11745370e-01,

2.26723474e-01],

[3.64526586e-23, nan, nan, nan,

4.72418787e-02],

[2.83865672e-20, nan, nan, nan,

2.89900805e-03],

[2.30917003e-17, nan, nan, nan,

1.03700592e-04],

[2.33202968e-15, 1.13693514e-12, 3.17245188e-10, 6.23075679e-08,

2.13497230e-06]]])

Coordinates:

* unit (unit) int64 48B 1 2 3 4 5 6

* a (a) float64 40B -0.8 -0.4 1.11e-16 0.4 0.8

* b (b) float64 40B -0.8 -0.4 1.11e-16 0.4 0.8

Attributes: (3)

tuning_curves_2d_continuous.name="ΔF/F"

tuning_curves_2d_continuous.attrs["unit"]="a.u."

tuning_curves_2d_continuous.plot(col="unit", col_wrap=3);

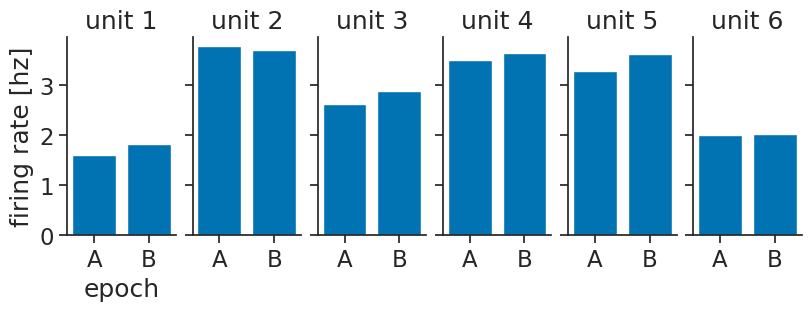

From epochs#

When computing from epochs, you should store them in a dictionary:

epochs_dict = {

"A": nap.IntervalSet(start=[0, 20, 40, 60, 80], end=[10, 29, 49, 69, 89]),

"B":nap.IntervalSet(start=[10, 30, 40, 60, 90], end=[19, 39, 59, 79, 99])

}

You can then compute the tuning curves using nap.compute_response_per_epoch.

You can pass either a TsGroup for spikes, or a TsdFrame for rates/calcium activity.

The output is an xarray.DataArray with labeled dimensions:

epochs_tuning_curves = nap.compute_response_per_epoch(tsgroup_2d, epochs_dict)

epochs_tuning_curves

<xarray.DataArray (unit: 6, epochs: 2)> Size: 96B

array([[1.60869565, 1.83076923],

[3.7826087 , 3.70769231],

[2.63043478, 2.87692308],

[3.5 , 3.64615385],

[3.2826087 , 3.63076923],

[2. , 2.03076923]])

Coordinates:

* unit (unit) int64 48B 1 2 3 4 5 6

* epochs (epochs) <U1 8B 'A' 'B'

We can visualize using barplots:

fig, axs = plt.subplots(

1, N, constrained_layout=True, sharey=True, figsize=(8, 3)

)

for unit, ax in zip(epochs_tuning_curves.coords["unit"], axs):

    ax.bar(

        epochs_tuning_curves.coords["epochs"],

        epochs_tuning_curves.sel(unit=unit),

    )

    ax.set_title(f"unit {unit.item()}")

axs[0].set_xlabel("epoch")

axs[0].set_ylabel("firing rate [Hz]");
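
For spike data, a per-epoch response of this kind can be understood as a pooled firing rate: the number of spikes falling inside any interval of the epoch, divided by the total duration of those intervals. The sketch below shows that computation in NumPy. The helper name is hypothetical, and the pooling is our assumption about what is computed, not a description of pynapple's code.

```python
import numpy as np


def pooled_rate(spike_times, starts, ends):
    """Hypothetical helper: spikes inside the intervals / total interval duration."""
    spike_times = np.sort(np.asarray(spike_times, dtype=float))
    starts = np.asarray(starts, dtype=float)
    ends = np.asarray(ends, dtype=float)

    # Count spikes in each closed interval [start, end] with two binary searches
    first = np.searchsorted(spike_times, starts, side="left")
    last = np.searchsorted(spike_times, ends, side="right")
    n_spikes = np.sum(last - first)
    return n_spikes / np.sum(ends - starts)


spike_times = [1.0, 2.0, 3.0, 12.0, 25.0]
rate_A = pooled_rate(spike_times, starts=[0, 20], ends=[10, 30])
rate_B = pooled_rate(spike_times, starts=[10], ends=[20])
```

Note that overlapping intervals across different epochs, as in epochs_dict above, are not a problem for this computation, since each epoch is evaluated independently.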

Error bars#

Often, you will want error bars on your tuning curves, to be able to quantify uncertainty. Pynapple does not provide explicit functions for this, but in this section we will show how you can easily compute error bars yourself, using the functions we introduced above.

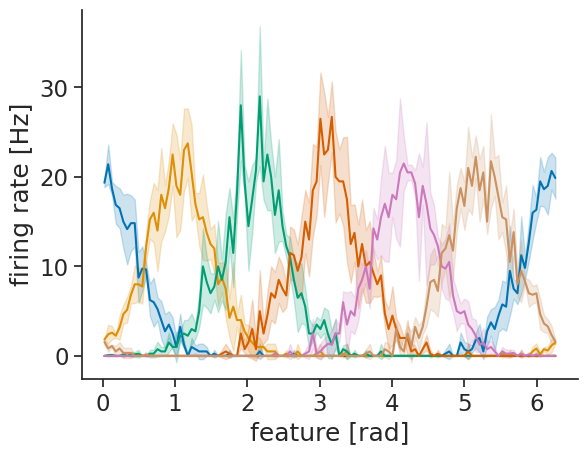

From timestamps or continuous activity#

If you are computing tuning curves against features, you can split your session into n_splits,

compute a tuning curve per split, and then compute statistics over those.

We will start by creating splits:

n_splits = 4

full_session = feature.time_support

split_length = full_session.tot_length() / n_splits

splits = full_session.split(split_length)

splits

index start end

0 0 124.995

1 124.995 249.99

2 249.99 374.985

3 374.985 499.98

shape: (4, 2), time unit: sec.

Then, we can compute the tuning curves like before, by looping over the splits:

tuning_curves_per_split = [

nap.compute_tuning_curves(

tsgroup_1d,

epochs=split,

features=feature,

bins=120,

range=(0, 2 * np.pi),

feature_names=["feature"],

)

for split in splits

]

To make things easier down the line, we advise combining these into one big

xarray.DataArray using xarray.concat, adding a dimension for the splits:

tuning_curves_per_split = xr.concat(tuning_curves_per_split, dim="split")

tuning_curves_per_split

<xarray.DataArray (split: 4, unit: 6, feature: 120)> Size: 23kB

array([[[18.64640884, 21.33333333, 19.2 , ..., 16.8 ,

17.66666667, 17.3553719 ],

[ 1.93370166, 2.33333333, 3.6 , ..., 0.8 ,

0.33333333, 1.51515152],

[ 0. , 0.33333333, 0. , ..., 0. ,

0. , 0. ],

[ 0. , 0. , 0. , ..., 0. ,

0. , 0. ],

[ 0. , 0. , 0. , ..., 0. ,

0. , 0.13774105],

[ 1.24309392, 1. , 1.2 , ..., 4.4 ,

3.66666667, 1.37741047]],

[[19.97245179, 18. , 18.4 , ..., 18.4 ,

23. , 23.61878453],

[ 1.92837466, 2.33333333, 1.6 , ..., 0. ,

0.66666667, 1.51933702],

[ 0. , 0. , 0. , ..., 0. ,

0. , 0. ],

[ 0. , 0. , 0. , ..., 0. ,

...

0. , 0. ],

[ 0. , 0. , 0. , ..., 0. ,

0. , 0. ],

[ 0. , 0. , 0. , ..., 0. ,

0. , 0. ],

[ 1.51933702, 1.33333333, 1.2 , ..., 2.8 ,

1.33333333, 1.92837466]],

[[19.14600551, 24. , 17.6 , ..., 16.4 ,

22. , 19.39058172],

[ 1.65289256, 1. , 2.8 , ..., 1.2 ,

2.33333333, 1.66204986],

[ 0. , 0. , 0. , ..., 0. ,

0. , 0. ],

[ 0. , 0. , 0. , ..., 0. ,

0. , 0. ],

[ 0. , 0. , 0. , ..., 0. ,

0. , 0. ],

[ 1.37741047, 0.33333333, 1.2 , ..., 2.4 ,

1.33333333, 1.38504155]]], shape=(4, 6, 120))

Coordinates:

* unit (unit) int64 48B 1 2 3 4 5 6

* feature (feature) float64 960B 0.02618 0.07854 0.1309 ... 6.152 6.205 6.257

Dimensions without coordinates: split

Attributes: (4)

Computing the mean and standard deviation can then be done easily using:

means = tuning_curves_per_split.mean(dim="split")

stds = tuning_curves_per_split.std(dim="split")
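
If you prefer a standard error of the mean over a standard deviation, the same reduction can be extended slightly. The NumPy sketch below uses a small made-up array with dimensions (split, unit, feature). It assumes NaN-skipping reductions and the population standard deviation (ddof=0), which we believe matches xarray's defaults for floating-point data; treat that as an assumption to verify.

```python
import numpy as np

# Made-up tuning curves: 2 splits, 1 unit, 3 feature bins.
# The NaN marks a bin that was not visited during the first split.
per_split = np.array(
    [
        [[1.0, 2.0, np.nan]],
        [[3.0, 2.0, 4.0]],
    ]
)

# Mean and standard deviation over the split axis, ignoring NaNs
means_np = np.nanmean(per_split, axis=0)
stds_np = np.nanstd(per_split, axis=0)

# Standard error: divide by the square root of the number of valid splits per bin
n_valid = np.sum(~np.isnan(per_split), axis=0)
sems_np = stds_np / np.sqrt(n_valid)
```

With only a handful of splits, these spread estimates are themselves noisy, so it is worth checking how they change with n_splits.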

Visualizing also becomes simpler:

lines = means.plot.line(x="feature", add_legend=False)

for line, unit in zip(lines, means.coords["unit"]):

    mean = means.sel(unit=unit)

    std = stds.sel(unit=unit)

    plt.fill_between(

        means["feature"],

        mean - std,

        mean + std,

        color=line.get_color(),

        alpha=0.2,

    )

plt.xlabel("feature [rad]")

plt.ylabel("firing rate [Hz]");

To make things easier in the future, here is a function that does all of this:

def compute_tuning_curves_with_error_bars(

    data, features, bins, range, feature_names, n_splits

):

    # Get splits

    full_session = features.time_support

    split_length = full_session.tot_length() / n_splits

    splits = full_session.split(split_length)

    # Compute tuning curves per split

    tuning_curves_per_split = [

        nap.compute_tuning_curves(

            data,

            features=features,

            epochs=split,

            bins=bins,

            range=range,

            feature_names=feature_names,

        )

        for split in splits

    ]

    tuning_curves_per_split = xr.concat(tuning_curves_per_split, dim="split")

    # Return mean and standard deviation

    return (

        tuning_curves_per_split.mean(dim="split"),

        tuning_curves_per_split.std(dim="split")

    )

Feel free to extend it to your needs!

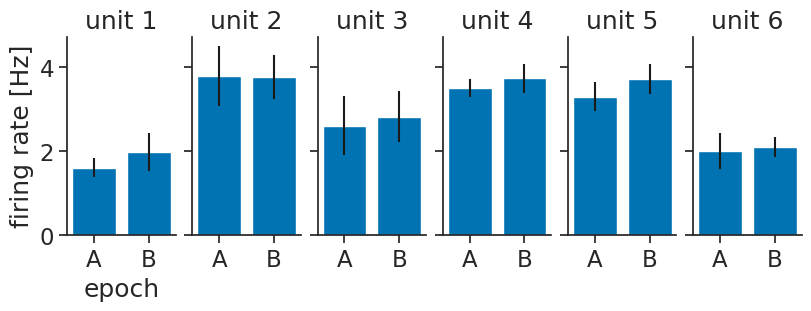

From epochs#

If you want error bars for epochs, the typical use-case will be that you have multiple presentations of a stimulus, and you want the mean response over those:

epochs_dict

{'A': index start end

0 0 10

1 20 29

2 40 49

3 60 69

4 80 89

shape: (5, 2), time unit: sec.,

'B': index start end

0 10 19

1 30 39

2 40 59

3 60 79

4 90 99

shape: (5, 2), time unit: sec.}

So, we can use nap.compute_response_per_epoch in a loop to compute that:

epochs_tuning_curves_per_presentation = [

nap.compute_response_per_epoch(

tsgroup_2d, {"A": stimulus_A, "B": stimulus_B}

)

for stimulus_A, stimulus_B in zip(epochs_dict["A"], epochs_dict["B"])

]

To make things easier down the line, we advise combining these into one big

xarray.DataArray using xarray.concat, adding a dimension for the presentations:

epochs_tuning_curves_per_presentation = xr.concat(

epochs_tuning_curves_per_presentation, dim="presentation"

)

epochs_tuning_curves_per_presentation

<xarray.DataArray (presentation: 5, unit: 6, epochs: 2)> Size: 480B

array([[[1.7 , 2.66666667],

[3.7 , 3.11111111],

[3.9 , 2.77777778],

[3.2 , 3.55555556],

[2.8 , 4.33333333],

[1.9 , 2.44444444]],

[[1.55555556, 2.11111111],

[4.44444444, 4.55555556],

[2.11111111, 3.44444444],

[3.55555556, 4.33333333],

[3.77777778, 3.66666667],

[1.55555556, 2. ]],

[[1.22222222, 1.31578947],

[2.55555556, 3.31578947],

[2.77777778, 2.47368421],

[3.33333333, 3.42105263],

[3.55555556, 3.63157895],

[1.55555556, 1.73684211]],

[[1.88888889, 1.68421053],

[4.55555556, 3.78947368],

[2.22222222, 3.52631579],

[3.66666667, 3.47368421],

[3.11111111, 3.21052632],

[2.44444444, 2.05263158]],

[[1.66666667, 2.11111111],

[3.66666667, 4.11111111],

[2. , 1.88888889],

[3.77777778, 3.88888889],

[3.22222222, 3.77777778],

[2.55555556, 2.22222222]]])

Coordinates:

* unit (unit) int64 48B 1 2 3 4 5 6

* epochs (epochs) <U1 8B 'A' 'B'

Dimensions without coordinates: presentation

We can then visualize again, but now with error bars:

means = epochs_tuning_curves_per_presentation.mean(dim="presentation")

stds = epochs_tuning_curves_per_presentation.std(dim="presentation")

fig, axs = plt.subplots(

1, N, constrained_layout=True, sharey=True, figsize=(8, 3)

)

for unit, ax in zip(epochs_tuning_curves.coords["unit"], axs):

    ax.bar(

        means.coords["epochs"], means.sel(unit=unit), yerr=stds.sel(unit=unit)

    )

    ax.set_title(f"unit {unit.item()}")

axs[0].set_xlabel("epoch")

axs[0].set_ylabel("firing rate [Hz]");
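
One subtlety of averaging per presentation is that the mean of per-presentation rates is not the same as the rate pooled over all presentations when the presentations differ in length. The NumPy sketch below makes that difference explicit on made-up data; the helper name is hypothetical and the code only illustrates the arithmetic.

```python
import numpy as np


def rate_per_presentation(spike_times, starts, ends):
    """Hypothetical helper: one firing rate (Hz) per interval."""
    spike_times = np.sort(np.asarray(spike_times, dtype=float))
    starts = np.asarray(starts, dtype=float)
    ends = np.asarray(ends, dtype=float)

    # Spike count in each closed interval [start, end]
    first = np.searchsorted(spike_times, starts, side="left")
    last = np.searchsorted(spike_times, ends, side="right")
    return (last - first) / (ends - starts)


# Two presentations of unequal length: 10 s and 5 s
spike_times = [1.0, 2.0, 3.0, 21.0, 22.0]
starts, ends = [0, 20], [10, 25]

rates = rate_per_presentation(spike_times, starts, ends)
mean_rate, std_rate = rates.mean(), rates.std()

# Pooled rate: all spikes divided by the total presentation time
pooled = len(spike_times) / np.sum(np.subtract(ends, starts))
```

Here the per-presentation mean weights both presentations equally, whereas the pooled rate weights them by duration, so the two numbers differ slightly.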

To make things easier in the future, here is a function that does all of this:

def compute_response_per_epoch_with_error_bars(data, epochs_dict):

    epochs_dict_per_presentation = [

        dict(zip(epochs_dict.keys(), presentations))

        for presentations in zip(*epochs_dict.values())

    ]

    epochs_tuning_curves_per_presentation = [

        nap.compute_response_per_epoch(data, presentation_epochs_dict)

        for presentation_epochs_dict in epochs_dict_per_presentation

    ]

    epochs_tuning_curves_per_presentation = xr.concat(

        epochs_tuning_curves_per_presentation, dim="presentation"

    )

    return (

        epochs_tuning_curves_per_presentation.mean(dim="presentation"),

        epochs_tuning_curves_per_presentation.std(dim="presentation"),

    )

Feel free to extend it to your needs!

Mutual information#

Given a set of tuning curves, you can use compute_mutual_information to compute the mutual information between the activity of the neurons and the features, regardless of the number of feature dimensions.

See the Skaggs et al. (1992) paper for more information on what mutual information computes.

MI = nap.compute_mutual_information(tuning_curves_1d)

MI

| | bits/sec | bits/spike |

|---|---|---|

| 1 | 7.606542 | 1.007182 |

| 2 | 5.401980 | 1.521624 |

| 3 | 5.687713 | 2.240932 |

| 4 | 5.774442 | 2.343430 |

| 5 | 5.602480 | 2.210835 |

| 6 | 5.434886 | 1.484882 |

MI = nap.compute_mutual_information(tuning_curves_2d)

MI

| | bits/sec | bits/spike |

|---|---|---|

| 1 | 4.210053 | 2.397429 |

| 2 | 6.043978 | 1.733717 |

| 3 | 5.830994 | 1.665932 |

| 4 | 5.828592 | 1.672893 |

| 5 | 6.069050 | 1.700899 |

| 6 | 4.197253 | 2.329126 |
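
For readers who want to see what these two columns measure, the sketch below computes the Skaggs information for a single unit from its tuning curve and occupancy: the information rate in bits/sec is the occupancy-weighted sum of rate times log2(rate / mean rate), and bits/spike is that value divided by the mean rate. The helper name is hypothetical, and whether compute_mutual_information handles edge cases (NaN bins, zero rates) in exactly this way is an assumption.

```python
import numpy as np


def skaggs_information(rates, occupancy):
    """Hypothetical helper: Skaggs information for one unit.

    rates: firing rate per bin (any number of feature dimensions)
    occupancy: time spent in each bin, same shape as rates
    """
    rates = np.asarray(rates, dtype=float).ravel()
    occupancy = np.asarray(occupancy, dtype=float).ravel()

    # Ignore bins that were never visited
    valid = ~np.isnan(rates) & (occupancy > 0)
    p = occupancy[valid] / occupancy[valid].sum()  # occupancy probability
    r = rates[valid]
    mean_rate = np.sum(p * r)

    # Bins with zero rate contribute nothing (limit of x * log2(x) as x -> 0)
    nz = r > 0
    bits_per_sec = np.sum(p[nz] * r[nz] * np.log2(r[nz] / mean_rate))
    return bits_per_sec, bits_per_sec / mean_rate


# A unit that fires at 20 Hz in one half of the feature range and is silent elsewhere
info_sec, info_spike = skaggs_information(rates=[0.0, 20.0], occupancy=[5.0, 5.0])

# A unit with a flat tuning curve carries no information about the feature
flat_sec, flat_spike = skaggs_information(rates=[10.0, 10.0], occupancy=[5.0, 5.0])
```

In the first toy case each spike tells you which half of the feature range the animal is in, which is exactly one bit per spike.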

Take a look at the tutorial on head direction cells for a realistic example.